Overview

Deep learning has become a prominent practice in the FinTech industry for supervising or automating critical decisions. For example, neural networks can be used to automate stock trading and to characterize and detect fraudulent transactions.

This demonstration shows how to build a neural network using Chronicle Services, where each service represents a layer in the network. Before the Java representation was put together, the network was trained using the TensorFlow Playground. The picture below illustrates the network configuration that is used throughout the demo.

Every step in the neural network has a distinct Chronicle service representation: a feature service, two hidden layer services, and a classifier service.

The services use biases and weights that were obtained by training the neural network until the test and training losses were stable at almost 0. The network uses tanh as its activation function, which is applied to the output of the hidden layers and the classifier.

Tip: To view the bias for a certain neuron, hover over the small square in the lower left corner of the neuron. To view its weight, instead hover over one of the connections between the layers.
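To make the roles of the weights, biases, and tanh concrete, here is a minimal plain-Java sketch of a single fully connected layer. It is not part of the demo: the class name `DenseTanhSketch`, the method `forward`, and the numbers in `main` are made up for illustration, and `Math.tanh` stands in for whatever activation class the demo uses.

```java
final class DenseTanhSketch {

    /** Computes tanh(bias[j] + sum over i of in[i] * weights[j][i]) for every neuron j. */
    static double[] forward(double[] in, double[][] weights, double[] bias) {
        double[] out = new double[bias.length];
        for (int j = 0; j < bias.length; j++) {
            double sum = bias[j];
            for (int i = 0; i < in.length; i++) {
                sum += in[i] * weights[j][i];
            }
            // tanh squashes the weighted sum into the range [-1, 1]
            out[j] = Math.tanh(sum);
        }
        return out;
    }

    public static void main(String[] args) {
        // Illustrative values only: one neuron with two inputs.
        // 0.0 + 1.0 * 0.5 + 2.0 * -0.25 = 0.0, and tanh(0.0) = 0.0
        double[] out = forward(new double[] {1.0, 2.0},
                new double[][] {{0.5, -0.25}},
                new double[] {0.0});
        System.out.println(out[0]);
    }
}
```

Each service in the demo performs this kind of computation for its own layer, with the trained weights and biases written out as constants.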

Configuration

Each service is connected to the next via a queue that acts as the output of one layer and as the input of the next. Hence, the network described above requires a total of five queues: an input queue, one between each pair of adjacent layers, and a queue that contains the classifications made by the neural network.

The following YAML file configures the described system:

    !ChronicleServicesCfg {
      queues: {
        input: {
          path: input,
          sourceId: 1,
        },
        feature: {
          path: feature,
          sourceId: 2,
        },
        hiddenLayer0: {
          path: hiddenLayer0,
          sourceId: 3,
        },
        hiddenLayer1: {
          path: hiddenLayer1,
          sourceId: 4,
        },
        classification: {
          path: classification,
          sourceId: 5,
        },
      },
      services: {
        featureService: {
          inputs: [ input ],
          output: feature,
          implClass: !type FeatureServiceImpl,
        },
        hiddenLayer0Service: {
          inputs: [ feature ],
          output: hiddenLayer0,
          implClass: !type HiddenLayer0ServiceImpl,
        },
        hiddenLayer1Service: {
          inputs: [ hiddenLayer0 ],
          output: hiddenLayer1,
          implClass: !type HiddenLayer1ServiceImpl,
        },
        classifierService: {
          inputs: [ hiddenLayer1 ],
          output: classification,
          implClass: !type ClassificationServiceImpl,
        },
      },
    }
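The chaining in this configuration, where each service reads from one queue and writes to the next, can be modelled with ordinary in-memory queues. The sketch below is only an analogy and does not use the Chronicle Queue or Chronicle Services APIs: `QueuePipelineSketch` and all of its methods are hypothetical names, and each stage is reduced to a function on `double[]`.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.List;
import java.util.Queue;
import java.util.function.UnaryOperator;

final class QueuePipelineSketch {
    private final List<Queue<double[]>> queues = new ArrayList<>();
    private final List<UnaryOperator<double[]>> stages;

    QueuePipelineSketch(List<UnaryOperator<double[]>> stages) {
        this.stages = stages;
        // A chain of N stages needs N + 1 queues: one input queue plus one output per stage.
        for (int i = 0; i <= stages.size(); i++) {
            queues.add(new ArrayDeque<>());
        }
    }

    int queueCount() {
        return queues.size();
    }

    /** Appends a message to the first (input) queue. */
    void write(double[] input) {
        queues.get(0).add(input);
    }

    /** Lets every stage, in order, consume its input queue and publish to its output queue. */
    void drain() {
        for (int i = 0; i < stages.size(); i++) {
            Queue<double[]> in = queues.get(i);
            Queue<double[]> out = queues.get(i + 1);
            double[] msg;
            while ((msg = in.poll()) != null) {
                out.add(stages.get(i).apply(msg));
            }
        }
    }

    /** Removes and returns the oldest message on the last queue, or null if it is empty. */
    double[] readResult() {
        return queues.get(queues.size() - 1).poll();
    }
}
```

Constructed with four stages, mirroring the four services above, `queueCount()` returns 5, which matches the five entries under `queues` in the YAML file.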

The /service package contains the implementation of each of the layers. As an example, the first hidden layer, implemented in HiddenLayer0ServiceImpl.java, takes as input the values from the feature layer (an x-value and a y-value). These values are combined by the method accumulate(final Feature in, final HiddenLayer0 out) using the weights and biases obtained from the TensorFlow Playground. Before being sent to the output queue, the values are also passed through the activation function.

    public final class HiddenLayer0ServiceImpl
            extends AbstractService<Feature, HiddenLayer0, HiddenLayer1Service>
            implements HiddenLayer0Service {

        private final Activation activation = StandardActivation.get();

        public HiddenLayer0ServiceImpl(HiddenLayer1Service out) {
            super(Feature.class, HiddenLayer0::new, out, HiddenLayer1Service::hiddenLayer0);
        }

        @Override
        public void feature(final Feature feature) {
            accumulateAndSend(feature);
        }

        @Override
        void accumulate(final Feature in, final HiddenLayer0 out) {
            // bias + input_x0 * weight_0 + input_x1 * weight_1
            out.v0(activation.applyAsDouble(-1.4 + in.x0() * -0.24 + in.x1() * 0.69));
            out.v1(activation.applyAsDouble(-1.2 + in.x0() * -0.45 + in.x1() * -0.49));
            out.v2(activation.applyAsDouble(1.3 + in.x0() * 0.42 + in.x1() * 0.46));
            out.v3(activation.applyAsDouble(-1.4 + in.x0() * 0.65 + in.x1() * -0.11));
        }
    }

Note: These biases and weights are particular to our configuration of the TensorFlow Playground. If you run your own training session, you may end up with different results.

Each of the remaining service layers greatly resembles the implementation shown above.

Running the example

Note: If you are using Java 9 or later on Linux/Mac, first run the script j9setup.sh with the command source j9setup.sh.

Start a new command line shell and run:

cd Chronicle-Services-Demo/Example13

mvn install -Dtest=IntegrationTest test

This runs the integration test located in IntegrationTest.java, which uses the built classifier to classify points in the range -6.0 to 6.0 (for both x and y).

For those wishing to experiment with custom input, ServiceMain.java runs the Chronicle services and accepts input to the input queue at runtime. Contents written to the input queue are mapped to features in the first layer and are then passed through the neural network services.

To run the main service, use the following commands:

cd Chronicle-Services-Demo/Example13

mvn install exec:java@ServiceMain -DskipTests

Results

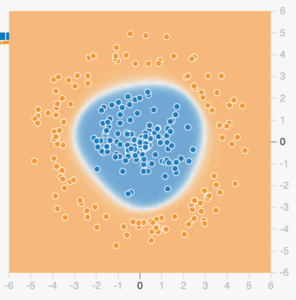

The neural network is trained on a circular dataset that classifies points in an area around the origin as blue and the surrounding points as yellow. Blue and yellow are represented numerically by positive and negative values, respectively.
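Reading a classifier output therefore comes down to checking its sign. The helper below is a hypothetical illustration, not code from the demo; in particular, treating an output of exactly 0 as yellow is an arbitrary assumption.

```java
final class ClassificationLabelSketch {

    /** Maps a classifier output to the colour used in the dataset. */
    static String label(double c) {
        // Assumption: an output of exactly 0 is reported as "yellow".
        return c > 0 ? "blue" : "yellow";
    }

    public static void main(String[] args) {
        System.out.println(label(0.5));   // positive, so "blue"
        System.out.println(label(-0.5));  // negative, so "yellow"
    }
}
```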

Hence, the output from the integration test should include the following results:

It is easy to see the strong correlation between the test results and the output obtained when running the simulation in the TensorFlow Playground.

To get a better understanding of how the individual layers operate, look at the output printed before the visual representation. Here we can trace how the service layers manipulate a single input point (0.1, -0.1) and finally yield a classification of approximately 0.9999:

    [!software.chronicle.services.demo.example13.dto.Classification {
      eventId: "",
      eventTime: 0,
      c: 0.9998653695957538
    }
    ]
    NetworkTester{inputs=[!software.chronicle.services.demo.example13.dto.Input {
      eventId: "",
      eventTime: 0,
      x: 0.1,
      y: -0.1
    }
    ], features=[!software.chronicle.services.demo.example13.dto.Feature {
      eventId: "",
      eventTime: 0,
      x0: 0.1,
      x1: -0.1
    }
    ], hiddenLayer0s=[!software.chronicle.services.demo.example13.dto.HiddenLayer0 {
      eventId: "",
      eventTime: 0,
      v0: -0.9038752622429152,
      v1: -0.8324304514645002,
      v2: 0.8606898704014198,
      v3: -0.8677752290732312
    }
    ], hiddenLayer1s=[!software.chronicle.services.demo.example13.dto.HiddenLayer1 {
      eventId: "",
      eventTime: 0,
      v0: 0.9303880924551851,
      v1: 0.9536078125314426
    }
    ], classifications=[!software.chronicle.services.demo.example13.dto.Classification {
      eventId: "",
      eventTime: 0,
      c: 0.9998653695957538
    }
    ]}
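As a sanity check on this trace, the hiddenLayer0 values can be recomputed by hand from the weights and biases in HiddenLayer0ServiceImpl. The standalone sketch below assumes that the demo's StandardActivation behaves like `Math.tanh`, which is consistent with the statement that the network uses tanh. The class name `HiddenLayer0Check` is made up for this illustration.

```java
final class HiddenLayer0Check {

    /** Recomputes the four hidden layer 0 outputs for the feature values (x0, x1). */
    static double[] hiddenLayer0(double x0, double x1) {
        // Same constants as in HiddenLayer0ServiceImpl: bias + x0 * weight_0 + x1 * weight_1.
        // Assumption: the activation function is Math.tanh.
        return new double[] {
            Math.tanh(-1.4 + x0 * -0.24 + x1 * 0.69),
            Math.tanh(-1.2 + x0 * -0.45 + x1 * -0.49),
            Math.tanh(1.3 + x0 * 0.42 + x1 * 0.46),
            Math.tanh(-1.4 + x0 * 0.65 + x1 * -0.11)
        };
    }

    public static void main(String[] args) {
        // The input point from the trace: x0 = 0.1, x1 = -0.1
        for (double v : hiddenLayer0(0.1, -0.1)) {
            System.out.println(v);
        }
    }
}
```

For the first neuron, the weighted sum is -1.4 - 0.024 - 0.069 = -1.493, and tanh(-1.493) is roughly -0.9039, in line with the v0 value in the trace. The other three neurons can be checked in the same way. The weights for the second hidden layer and the classifier are not listed here, so the sketch stops after the first hidden layer.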