"Chronicle FIX handles billions of price ticks per week, delivers consistent low latency, and never misses a beat"

- Paul Scott, Head of EFX, ANZ

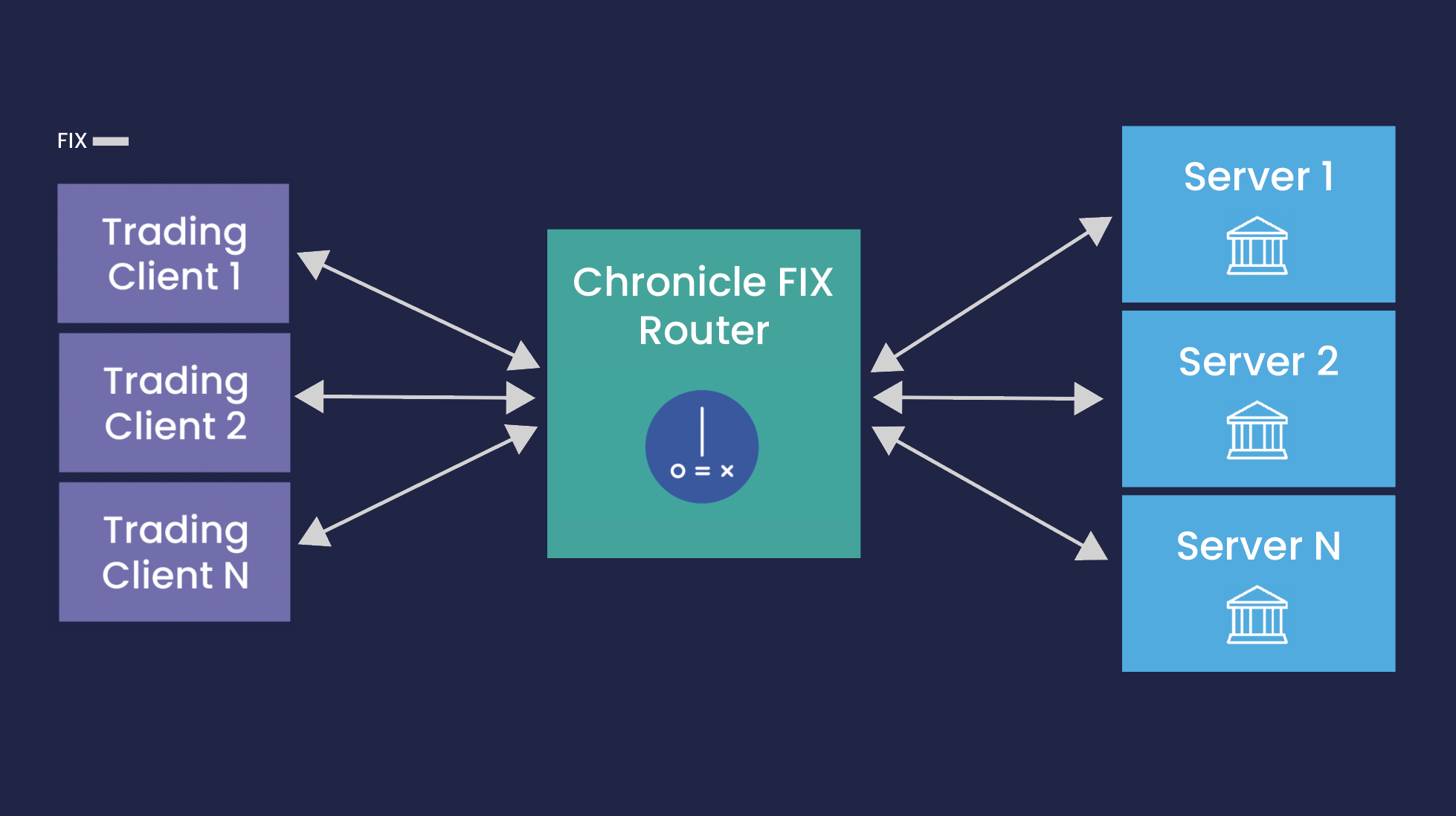

CHRONICLE'S FIX ROUTER

Flexibly Scale and Manage Distributed FIX Environments

Chronicle's FIX Router routes FIX messages, based on routing rules, between FIX engines. The FIX router is included as part of the Chronicle FIX product and is highly configurable, using a natural rule syntax to manage the flow of FIX messages. The key features are:

- Dynamic load balancer allowing distribution of messages across a pool of FIX gateway servers. This allows horizontal scaling of your server capacity.

- Standardised connection surface to insulate clients from backend server configuration and changes.

- Manage server update risk by flexibly routing only a subset of flow on and off newly updated servers.

- FIX version translator, so trading clients can connect even when they use a different version of the FIX protocol.

- Rule-based drop-copy functionality makes it possible to tap off selected pieces of information to other services.
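To make the idea of routing only a subset of flow to a newly updated server concrete, here is a minimal sketch in Java. It is not Chronicle's rule syntax or API: the `CanaryRouter` class, the server names, and the choice to bucket sessions by a hash of SenderCompID are all assumptions made for illustration.

```java
// Hypothetical illustration only; not part of Chronicle FIX.
// Sends a fixed percentage of sessions to a newly updated server.
final class CanaryRouter {
    private final String stableServer;
    private final String updatedServer;
    private final int percentToUpdated; // 0..100

    CanaryRouter(String stableServer, String updatedServer, int percentToUpdated) {
        if (percentToUpdated < 0 || percentToUpdated > 100) {
            throw new IllegalArgumentException("percentToUpdated must be in 0..100");
        }
        this.stableServer = stableServer;
        this.updatedServer = updatedServer;
        this.percentToUpdated = percentToUpdated;
    }

    // Assumption: sessions are bucketed by SenderCompID so that a given
    // client always lands on the same server for a given percentage.
    String route(String senderCompId) {
        int bucket = Math.floorMod(senderCompId.hashCode(), 100);
        return bucket < percentToUpdated ? updatedServer : stableServer;
    }
}
```

Raising the percentage step by step moves more flow onto the updated server, and setting it back to 0 takes all flow off again.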

Chronicle FIX in Action

Chronicle has supplied trading solutions to many top-tier financial institutions. Read one of our case studies to understand what you can achieve with Chronicle’s low-latency FIX Engine.

Highly Optimised DMA Solution

A large global bank asked Chronicle for a solution that optimised performance within their Equity Trading Group. Chronicle delivered a high-throughput, low-latency solution that markedly improved both execution and accuracy for their clients while integrating with the existing infrastructure.

Quick ROI on EFX Trading Platform

Chronicle built a bespoke EFX trading platform for a top-tier bank. By leveraging Chronicle FIX and EFX microservices, the system was deployed within six months. Message throughput increased by up to ten times, allowing a wider range of strategies that use the whole book.

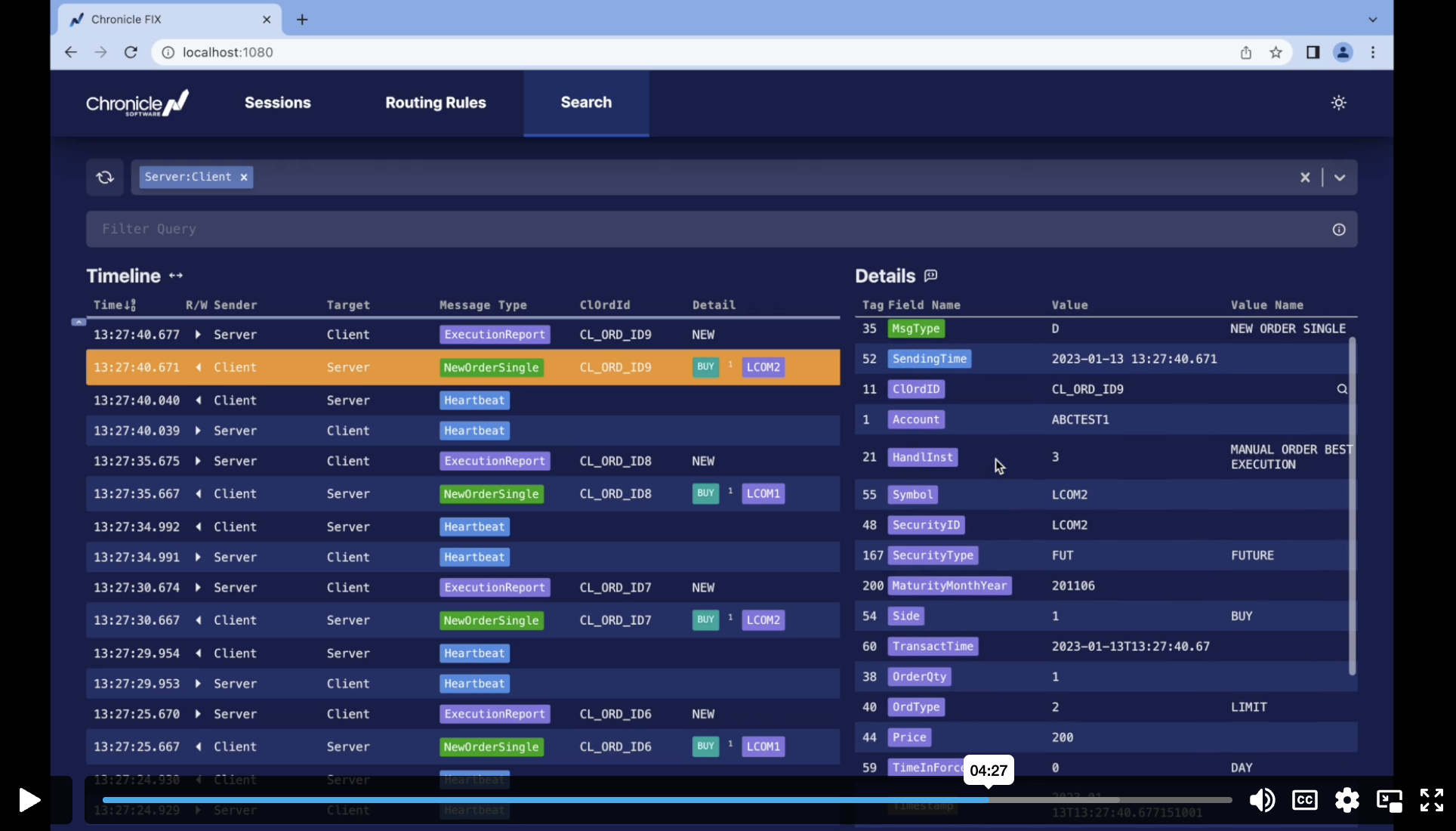

CHRONICLE'S VISUAL FIX PARSER

Facilitate Analysis of FIX Messages

Chronicle provides a free FIX parser that facilitates the analysis of FIX messages by presenting them in a human-readable format.
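As a rough illustration of what presenting a FIX message in human-readable form involves, the sketch below splits a raw tag=value message on the SOH delimiter and labels a few well-known tags. It is not the Chronicle parser: the `FixPrettyPrinter` class is hypothetical, and the small tag table covers only a handful of standard FIX header fields.

```java
import java.util.Map;

// Hypothetical helper, not part of Chronicle FIX.
// Renders a raw FIX tag=value message as one labelled field per line.
final class FixPrettyPrinter {
    // FIX fields are separated by the SOH control character (0x01).
    private static final char SOH = '\u0001';

    // A deliberately tiny subset of standard FIX tag names.
    private static final Map<Integer, String> TAG_NAMES = Map.of(
            8, "BeginString", 35, "MsgType", 49, "SenderCompID",
            56, "TargetCompID", 34, "MsgSeqNum", 55, "Symbol", 10, "CheckSum");

    static String render(String raw) {
        StringBuilder out = new StringBuilder();
        for (String field : raw.split(String.valueOf(SOH))) {
            if (field.isEmpty()) {
                continue; // skip empty segments between delimiters
            }
            int eq = field.indexOf('=');
            if (eq <= 0) {
                out.append("<malformed> ").append(field).append('\n');
                continue;
            }
            String tagText = field.substring(0, eq);
            String value = field.substring(eq + 1);
            String name;
            try {
                name = TAG_NAMES.getOrDefault(Integer.parseInt(tagText), "Unknown");
            } catch (NumberFormatException e) {
                name = "Unknown"; // non-numeric tag
            }
            out.append(name).append(" (").append(tagText).append(") = ")
               .append(value).append('\n');
        }
        return out.toString();
    }
}
```

Calling `FixPrettyPrinter.render(raw)` on a logon or order message returns one line per field, for example `SenderCompID (49) = CLIENT`, which is easier to scan than the raw delimited string.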

Chronicle FIX UI

Chronicle FIX Engine now comes with a new user interface. It features a more intuitive layout and improved navigation, making it easier for users to access their FIX sessions. It includes the ability to:

- Manage sessions (such as resetting sequence numbers)

- Edit sessions

- Search through FIX messages

For more information on these features, watch our introductory video:

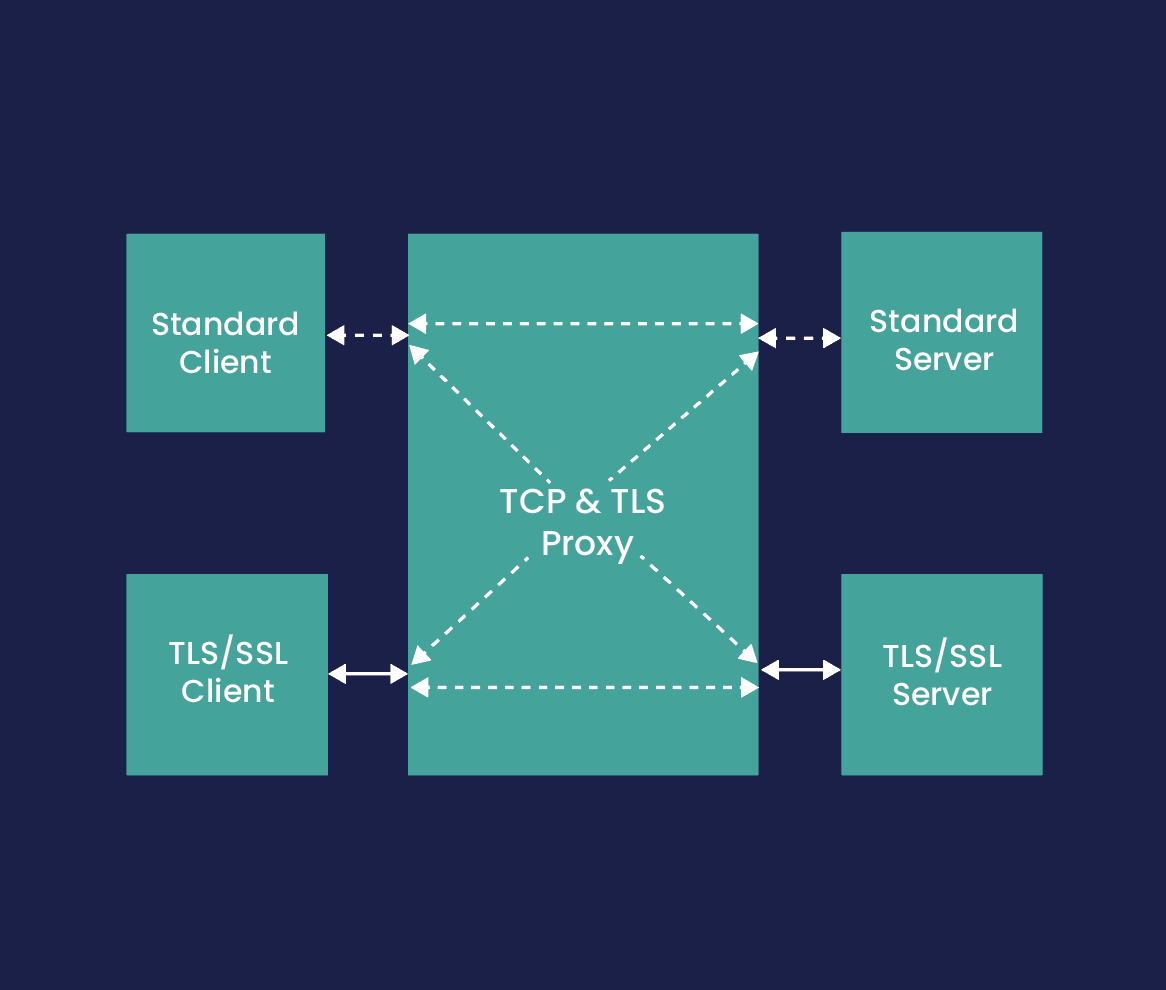

LIGHTWEIGHT PROXY

Secures Your FIX Communication

As an optional feature of Chronicle FIX, we provide a lightweight TCP and TLS proxy. Key features are:

- Multiple FIX/TCP Sessions - manage concurrent connections, routing from multiple listening ports to multiple downstream destinations

- Configurable Routing Policies - multiple policies supported, including Round Robin, Sticky Round Robin, and “Blue-Green” partitioning

- FIX Message Routing - message routing based on Sender/TargetCompID pairs

- FIX Message Validation - messages can be validated before passing to a downstream FIX server

- TLS Support - connections can be configured to initiate and/or terminate TLS for encryption and authentication

- Network Segmentation - can be installed in DMZ to satisfy network security requirements

- High Availability - transparent failover to backup servers/replica queues

- Low Overhead - less than 100 ns, plus one additional TCP hop
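The routing policies listed above can be pictured with a short sketch. The Java class below shows one plausible reading of Sticky Round Robin keyed on Sender/TargetCompID pairs: the first time a pair is seen it is given the next server in rotation, and later connections from the same pair go back to that server. The `StickyRoundRobin` class and the server addresses are hypothetical, and the proxy's actual policy semantics may differ.

```java
import java.util.List;
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.atomic.AtomicInteger;

// Hypothetical illustration only; not the Chronicle proxy implementation.
final class StickyRoundRobin {
    private final List<String> backends;
    private final AtomicInteger next = new AtomicInteger();
    // Remembers which backend each CompID pair was first assigned to.
    private final Map<String, String> assigned = new ConcurrentHashMap<>();

    StickyRoundRobin(List<String> backends) {
        if (backends.isEmpty()) {
            throw new IllegalArgumentException("need at least one backend");
        }
        this.backends = List.copyOf(backends);
    }

    String route(String senderCompId, String targetCompId) {
        String key = senderCompId + "->" + targetCompId;
        // New pairs take the next backend in rotation; known pairs stay put.
        return assigned.computeIfAbsent(key, k ->
                backends.get(Math.floorMod(next.getAndIncrement(), backends.size())));
    }
}
```

Dropping the `assigned` map and always taking the next server in rotation gives plain Round Robin; the sticky variant trades perfectly even distribution for session affinity.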

Licensing and Support

At Chronicle, we pride ourselves on the stability and knowledge of our development and support team. With decades of experience in the financial industry, we can be trusted with your toughest challenges.

Complete Control of the Code

All customers get full access to our GitHub repository, where you can read, fork, and create pull requests on the Chronicle FIX source code. This means you have complete control over your environment and the freedom to continually optimise your trading workflows.

Get Expert Help

Our expert consultants are ready to support you with any issues that may arise. We understand that the FIX Engine is usually only a small part of a system, and our experienced developers can help with architecture and latency issues in other areas if needed.

Simple Licensing Model

Our simple and transparent licensing model provides certainty and ensures that Chronicle’s low-latency FIX Engine is available to businesses of all sizes, from the largest bank to the smallest hedge fund.

Articles about Chronicle FIX

Introduction to Chronicle Services: Traditionally, low latency trading systems were developed as monolithic applications in low level languages such as C++. While these systems delivered the required performance, the development effort was extremely time consuming,…

By Sarah Butcher, 23 November 2020, eFinancial News: The accepted wisdom has it that if you’re building a high speed trading system you probably want to use C++ instead of Java: it’s closer to the metal and is…

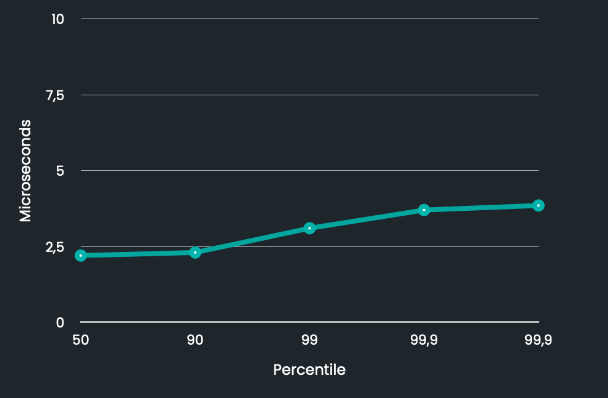

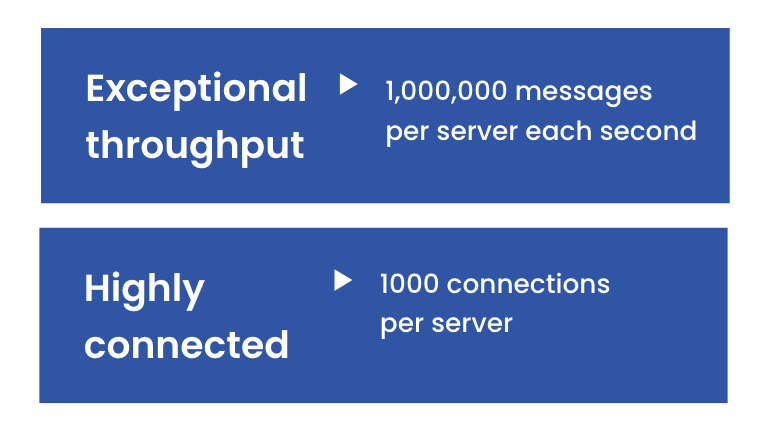

Overview: The FIX Trading Community web site has posted a list of common questions about FIX Engines. This page brings together our answers for these questions. For more details on Chronicle FIX Capabilities/throughput: Chronicle FIX supports; 100,000…